By 2026, artificial intelligence no longer surprises anyone with its ability to generate text, drive cars, or optimize logistics. But there is one frontier that still divides experts, businesses, and regulators: emotional AI. These are systems designed to read and respond to human feelings — not just words or actions, but tone, micro-expressions, heart rate, and even behavioral context.

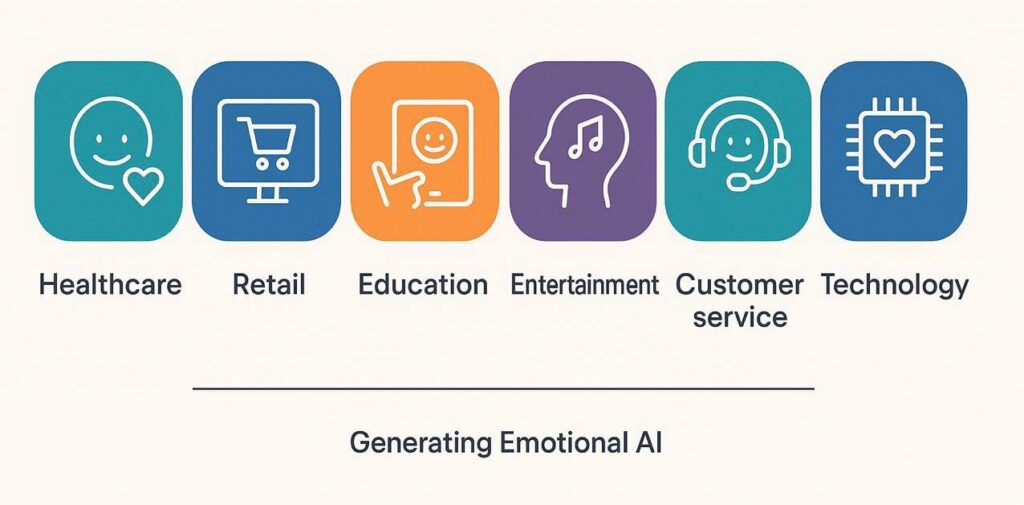

For some, emotional AI represents the next great leap: technology that could make healthcare more compassionate, classrooms more engaging, and customer service less frustrating. For others, it is a dangerous illusion — a machine pretending to feel, trained to manipulate rather than to understand.

The stakes are enormous. Analysts forecast the global market for affective computing to surpass $90 billion by 2030, with applications ranging from cars that prevent accidents to mental-health assistants. Yet the same systems could also be deployed in surveillance states, in manipulative advertising, or in workplaces where every frown is logged and analyzed.

Who this article is for:

- Healthcare and education innovators seeking new engagement tools.

- Compliance officers concerned with emotional data risks.

- Product managers and UX leaders building next-gen experiences.

- Investors tracking the fastest-growing segment of AI.

- Emotional AI is moving beyond sentiment analysis into multimodal emotional intelligence.

- By 2030, the global emotional AI market may exceed $90B.

- Healthcare, education, and customer service are leading adoption.

From Sentiment Analysis to Emotional Intelligence

The story of emotional AI begins not with empathy but with statistics. In the 2010s, businesses experimented with sentiment analysis: algorithms that classified tweets or reviews as positive, negative, or neutral. Useful, yes, but shallow. By the mid-2020s, advances in multimodal AI made it possible to combine facial recognition, speech analysis, physiological sensors, and contextual data into something richer — a portrait of how a person might be feeling.
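To make the idea of combining signals concrete, here is a minimal sketch of one common approach, confidence-weighted late fusion. It assumes each modality (face, voice, wearable) has already produced its own frustration estimate between 0 and 1 along with a confidence value. The names `ModalityReading` and `fuse_readings` are hypothetical and chosen for illustration; production systems typically use learned fusion models rather than a fixed weighted average.

```python
from dataclasses import dataclass


@dataclass
class ModalityReading:
    """One modality's frustration estimate in [0, 1] with a confidence in [0, 1]."""
    name: str
    score: float
    confidence: float


def fuse_readings(readings):
    """Combine per-modality scores with a confidence-weighted average.

    Returns None when no modality reports with nonzero confidence,
    so callers can tell "no signal" apart from "low frustration".
    """
    total_weight = sum(r.confidence for r in readings)
    if total_weight == 0:
        return None
    weighted_sum = sum(r.score * r.confidence for r in readings)
    return weighted_sum / total_weight


# Illustrative values only: the voice model is more confident than the face model.
readings = [
    ModalityReading("face", score=0.8, confidence=0.3),
    ModalityReading("voice", score=0.4, confidence=0.9),
]
fused = fuse_readings(readings)
```

A weighted average like this degrades gracefully when a sensor drops out, since a missing or zero-confidence modality simply stops contributing to the result.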

Today’s systems don’t just say “this customer is angry.” They estimate frustration levels on a scale of 1 to 10, predict whether that frustration will escalate, and recommend interventions. In cars, emotion-aware sensors track eyelid movement and heart rate variability to detect fatigue before drivers themselves notice. In call centers, AI listens for irritation and suggests calming scripts to human agents.

What has changed is the ambition: moving from describing emotions to predicting emotional trajectories — when irritation becomes anger, when hesitation turns to trust.
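One simple way to picture trajectory prediction is to fit a trend to recent frustration scores and extrapolate a few steps ahead. The sketch below assumes scores on the 1-to-10 scale described above, sampled at regular intervals. The function names, the threshold of 8, and the three-step horizon are illustrative assumptions; real systems would likely rely on trained sequence models rather than a straight line.

```python
def slope(values):
    """Least-squares slope of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def projected_score(history, steps_ahead=3):
    """Extrapolate the latest score along the fitted trend, clamped to 1..10."""
    if not history:
        raise ValueError("history must contain at least one score")
    projected = history[-1] + slope(history) * steps_ahead
    return max(1.0, min(10.0, projected))


def will_escalate(history, threshold=8.0, steps_ahead=3):
    """Flag a conversation whose projected score reaches the threshold."""
    return projected_score(history, steps_ahead) >= threshold


# Illustrative histories: a steadily rising caller and a stable one.
rising = [3, 4, 5, 6]
stable = [5, 5, 5, 5]
```

With these sample histories, `will_escalate(rising)` flags the first caller for intervention while the stable caller is left alone, which is the kind of early warning a call-center tool could pass to a human agent.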

The Business Promise: Billions in Value

Emotional AI is no longer a laboratory experiment; it is a commercial force. Analysts project that it could generate billions in economic value across industries. Healthcare providers are already testing systems that detect depression through voice changes or track stress through wearable devices. Retailers are using it to measure customer sentiment in real time, adjusting offers on the spot.

In education, platforms adapt lessons when students show signs of frustration, while entertainment companies design games and media experiences that respond directly to the player’s mood. For companies, the value is not only in efficiency but in building deeper relationships. Technology that can sense emotions creates a new level of personalization and loyalty — one that transforms users into long-term partners rather than one-time buyers.

The Risks: Bias, Manipulation, Surveillance

But the same capabilities that make Emotional AI powerful also make it dangerous. Misinterpretation of emotions across different cultures, genders, or age groups risks embedding systemic bias into critical systems. A cheerful expression in one culture may be neutral in another, yet the AI may flag it as “positive” everywhere.

There is also the risk of manipulation. If an algorithm can detect vulnerability, what prevents businesses from exploiting it to sell more aggressively?

Surveillance is perhaps the darkest concern. When cameras, microphones, and biometric sensors monitor feelings at work, in school, or in public, the line between helpful technology and invasive control blurs. A workplace that tracks “employee mood” may improve productivity, but it may also erode trust and autonomy.

Regulation and Governance in 2026

Governments are moving to catch up. In Europe, the AI Act prohibits emotion-recognition systems in workplaces and educational institutions, with narrow exceptions for medical or safety purposes, and treats most other uses as high-risk. In the United States, new state laws demand explicit consent when affective data is collected. Across Asia, regulators focus on transparency and disclosure, requiring businesses to explain how emotion-based algorithms reach their conclusions.

For enterprises, compliance is no longer optional. Ethical AI frameworks, bias audits, and human-in-the-loop safeguards are becoming as essential as cybersecurity protocols. Governance is the price of trust — and without trust, even the most advanced Emotional AI will fail in the market.
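As a rough illustration of what a bias audit can involve, the sketch below compares an emotion classifier's accuracy across demographic groups and reports the largest gap. It assumes a labeled evaluation set of `(group, predicted_label, true_label)` tuples. The function names and the 0.1 tolerance are hypothetical, and a real audit would cover more metrics and far larger samples.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute classifier accuracy separately for each group.

    records: iterable of (group, predicted_label, true_label) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(records):
    """Difference between the best-served and worst-served group."""
    scores = accuracy_by_group(records)
    if not scores:
        return 0.0
    return max(scores.values()) - min(scores.values())


def needs_review(records, tolerance=0.1):
    """Flag the model for human review when the gap exceeds the tolerance."""
    return accuracy_gap(records) > tolerance


# Tiny made-up evaluation set, for illustration only.
sample = [
    ("group_a", "positive", "positive"),
    ("group_a", "neutral", "neutral"),
    ("group_b", "positive", "neutral"),
    ("group_b", "neutral", "neutral"),
]
```

Here the model is right on every `group_a` example but only half of the `group_b` examples, so `needs_review(sample)` would route it to a human reviewer before deployment.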

The Road Ahead: Augmentation, Not Replacement

Despite both excitement and fear, Emotional AI is unlikely to replace human empathy. Instead, its greatest potential lies in augmentation. Doctors may use it to flag risks earlier, but they will still provide the care. Teachers may rely on it to track engagement, but they will remain the ones who inspire. Businesses may use it to analyze customer moods, but relationships will still be built on human interaction. The future is not about machines becoming empathetic humans. It is about machines giving people more time and better information so that real empathy, strategy, and creativity can flourish.

Conclusion

Emotional AI is one of the most promising yet controversial technologies of our time. It offers billions in potential value but carries risks of bias, manipulation, and surveillance. In 2026, the winners will not be those who adopt it blindly but those who integrate it responsibly — balancing innovation with ethics, personalization with privacy, and automation with humanity.

Don’t just follow the hype. Discover the opportunities and risks of Emotional AI in business!

Why Ficus Technologies?

At Ficus Technologies, we believe Emotional AI should serve people, not exploit them. That is why we design solutions with governance, transparency, and compliance built in from the start. Our expertise spans healthcare, finance, education, and entertainment, helping enterprises harness Emotional AI while avoiding its pitfalls. We build systems that are not just intelligent but trustworthy — technology that augments human talent rather than replacing it.

Emotional AI systems simulate empathy statistically, but they do not experience feelings.

The main application areas are healthcare, education, customer service, and automotive safety.

The biggest risk is the exploitation of emotional data for manipulation or surveillance.

Enterprises should start with small, low-risk pilots that include user consent, independent audits, and human oversight.