Modern digital products generate enormous volumes of data every second. User interactions, transactions, sensor signals, API requests, and system logs continuously flow through applications and infrastructure. For companies that rely on data-driven decisions, waiting hours or even minutes for insights is no longer acceptable.

Real-time analytics enables organizations to process and analyze data as it is generated. Instead of relying on traditional batch pipelines that produce delayed reports, real-time systems transform incoming events into immediate insights. This shift allows teams to detect anomalies faster, optimize operations dynamically, and create products that respond instantly to user behavior.

However, building a real-time analytics platform requires more than connecting streaming tools. It involves designing reliable architectures, managing event pipelines, and ensuring that data flows remain accurate, secure, and scalable across the entire system.

Beyond engineering teams, this guide is also useful for product managers and technical founders who want to understand how real-time data systems can improve user experience, operational monitoring, and strategic decision-making.

- Real-time analytics is transforming how organizations work with data.

- Streaming architectures enable continuous data processing instead of scheduled batch analysis.

- Technologies such as Apache Kafka, Apache Flink, Spark Structured Streaming, and cloud-native event platforms power modern real-time pipelines.

What Real-Time Analytics Means Today

Real-time analytics refers to the ability to process and analyze incoming data streams immediately after events occur. Instead of storing large datasets and analyzing them later, systems evaluate each event as it flows through the platform.
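
To make the contrast concrete, here is a minimal Python sketch. It assumes each event carries a single numeric value, such as an order amount. Instead of collecting the whole dataset and computing a result afterwards, the state is updated as each event arrives. `RunningStats` and the sample amounts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Incrementally maintained count, sum, and max over a stream of values."""
    count: int = 0
    total: float = 0.0
    maximum: float = float("-inf")

    def update(self, value: float) -> None:
        # Each event updates the state in O(1); no event history is stored.
        self.count += 1
        self.total += value
        self.maximum = max(self.maximum, value)

    @property
    def mean(self) -> float:
        return self.total / self.count if self.count else 0.0


order_amounts = [20.0, 35.5, 12.0, 80.0]  # hypothetical event payloads

stats = RunningStats()
for amount in order_amounts:
    stats.update(amount)
    # An up-to-date answer is available after every single event.
    print(f"after {stats.count} events: mean={stats.mean:.2f}, max={stats.maximum}")
```

The final mean equals what a batch job would compute over the full list; the difference is that the streaming version had a current answer after every event.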

This approach enables organizations to move from retrospective analysis to live operational intelligence. For example, e-commerce platforms can monitor user behavior and detect purchasing trends in real time. Financial institutions analyze transactions instantly to detect suspicious activity. Logistics companies track fleet data and optimize routes dynamically as conditions change.

Real-time analytics systems typically operate on event-driven data flows, where every interaction generates a structured event that travels through the pipeline. These events can trigger alerts, update dashboards, or activate automated decision-making systems.
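
The sketch below illustrates the idea in plain Python, with an in-process publish/subscribe dispatcher standing in for a real streaming platform. The `Event` fields, the handler names, and the 1,000 threshold are hypothetical; in production, dispatch would be handled by consumers reading from a broker such as Kafka.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Event:
    """A structured event; the field names here are illustrative only."""
    event_type: str
    user_id: str
    payload: dict
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


Handler = Callable[[Event], None]
handlers: dict[str, list[Handler]] = {}


def subscribe(event_type: str, handler: Handler) -> None:
    handlers.setdefault(event_type, []).append(handler)


def publish(event: Event) -> None:
    # Every handler registered for this event type reacts as the event arrives.
    for handler in handlers.get(event.event_type, []):
        handler(event)


alerts: list[str] = []
dashboard_counts: dict[str, int] = {}


def update_dashboard(event: Event) -> None:
    dashboard_counts[event.event_type] = dashboard_counts.get(event.event_type, 0) + 1


def alert_on_large_payment(event: Event) -> None:
    if event.payload.get("amount", 0) > 1000:  # hypothetical alert threshold
        alerts.append(f"large payment by {event.user_id}")


subscribe("payment", update_dashboard)
subscribe("payment", alert_on_large_payment)

publish(Event("payment", "u1", {"amount": 50}))
publish(Event("payment", "u2", {"amount": 2500}))
print(dashboard_counts, alerts)
```

The same event feeds both a dashboard counter and an alert rule, which is the fan-out pattern that event-driven pipelines rely on.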

As digital ecosystems become more complex, real-time analytics allows companies to maintain visibility and control across distributed systems.

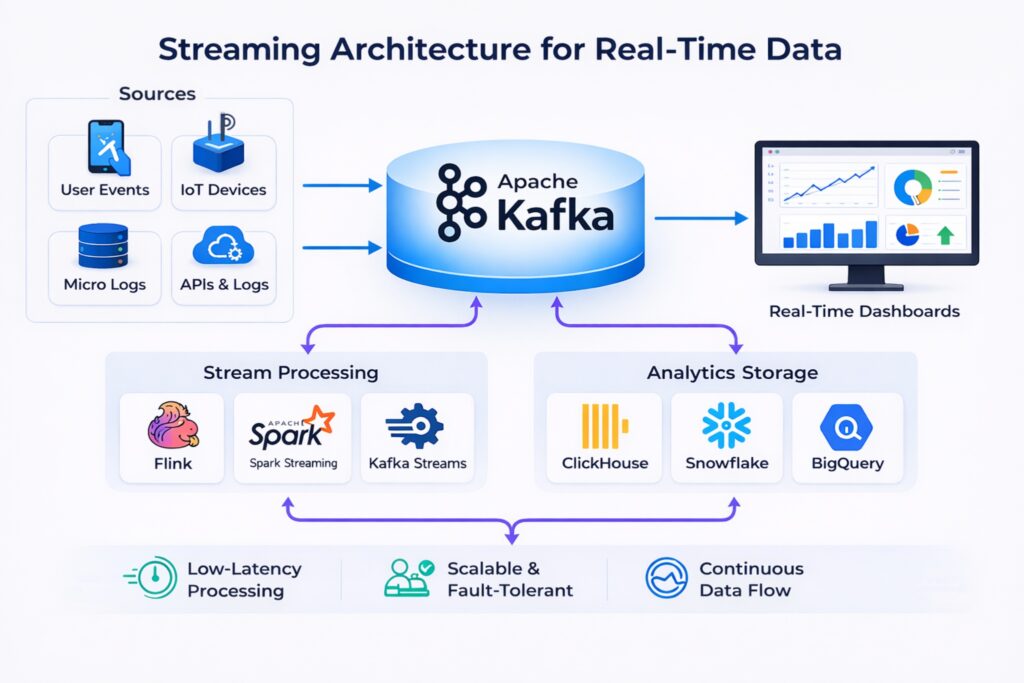

Streaming Architecture for Real-Time Data

The foundation of most real-time analytics platforms is streaming architecture. Instead of relying on periodic data exports, applications continuously publish events to streaming infrastructure.

Platforms like Apache Kafka have become central components of modern event-driven systems. Kafka enables applications to publish data streams that other services can consume, process, and analyze. Stream processing frameworks such as Apache Flink or Spark Structured Streaming then perform transformations, aggregations, and filtering in real time.
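
As an illustration of the kind of aggregation these frameworks perform, the following plain-Python sketch counts events per key in one-minute tumbling windows. It is not Flink or Spark code; it assumes timestamps in seconds and in-order arrival, and the sample click events are hypothetical.

```python
from collections import defaultdict
from typing import Iterable, Iterator


def tumbling_window_counts(
    events: Iterable[tuple[int, str]], window_seconds: int
) -> Iterator[tuple[int, dict[str, int]]]:
    """Count events per key in fixed, non-overlapping time windows.

    Assumes events arrive ordered by timestamp (seconds). Engines such as Flink
    also handle out-of-order events using watermarks, which this sketch omits.
    """
    current_window = None
    counts: dict[str, int] = defaultdict(int)
    for ts, key in events:
        window_start = ts - ts % window_seconds
        if current_window is not None and window_start != current_window:
            # A newer window has started, so the previous one is complete: emit it.
            yield current_window, dict(counts)
            counts = defaultdict(int)
        current_window = window_start
        counts[key] += 1
    if current_window is not None:
        yield current_window, dict(counts)  # flush the last open window


clicks = [(0, "home"), (12, "cart"), (59, "home"), (61, "home"), (130, "checkout")]
for start, window_counts in tumbling_window_counts(clicks, window_seconds=60):
    print(start, window_counts)
```

Because the function is a generator, each window's result is emitted as soon as the window closes rather than after the whole input has been read.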

In a typical architecture, data flows through multiple layers. Events are first ingested into the streaming platform, then processed through stream processing engines. Processed data can be stored in analytical databases optimized for fast queries, such as ClickHouse, BigQuery, or Snowflake. Visualization tools and dashboards consume this processed data to provide real-time insights to users and operators.

This layered approach allows systems to remain scalable while supporting both real-time analytics and historical data analysis.

Tools Powering Real-Time Analytics

A mature real-time analytics stack often combines several categories of tools.

Streaming platforms such as Apache Kafka, Redpanda, and cloud-native event services manage the flow of data between systems. Stream processing frameworks like Apache Flink, Spark Structured Streaming, and Kafka Streams handle transformations and calculations on live data streams.

For storage and querying, organizations often rely on high-performance analytical databases. ClickHouse has become a popular choice for real-time analytics because it can ingest large volumes of streaming data while serving low-latency queries. Cloud platforms such as BigQuery or Snowflake also support streaming ingestion and hybrid analytical workloads.

Visualization and monitoring tools complete the stack. Dashboards built with Grafana, Superset, or Looker allow teams to observe system behavior and business metrics as they change in real time.

Selecting the right combination of tools depends on scale, latency requirements, and the complexity of the data pipelines.

Best Practices for Building Reliable Pipelines

Real-time systems require strong operational discipline. Without proper architecture and governance, streaming pipelines can become difficult to maintain.

One of the most important practices is designing well-structured events. Events should follow consistent schemas and versioning strategies to prevent compatibility issues between producers and consumers. Schema registries are commonly used to enforce these standards.
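
A minimal sketch of schema versioning, assuming events are Python dictionaries that carry a `schema_version` field. The `SCHEMAS` dictionary is a hypothetical in-memory stand-in for a real schema registry, and the v1-to-v2 upgrade, which adds `currency` with a default value, illustrates backward-compatible evolution: consumers on the new schema can still read events from producers that have not upgraded yet.

```python
from typing import Any

# Hypothetical stand-in for a schema registry: required fields and types per version.
SCHEMAS: dict[int, dict[str, type]] = {
    1: {"order_id": str, "amount": float},
    2: {"order_id": str, "amount": float, "currency": str},
}


def upgrade_v1_to_v2(event: dict[str, Any]) -> dict[str, Any]:
    # Backward-compatible change: v2 adds a field that has a default value.
    return {**event, "schema_version": 2, "currency": "USD"}


def validate(event: dict[str, Any]) -> None:
    version = event.get("schema_version")
    schema = SCHEMAS.get(version)
    if schema is None:
        raise ValueError(f"unknown schema version: {version!r}")
    for name, expected in schema.items():
        # Strict type check: a missing field (None) also fails here.
        if not isinstance(event.get(name), expected):
            raise ValueError(f"field {name!r} must be {expected.__name__}")


def read(event: dict[str, Any]) -> dict[str, Any]:
    """Consumer-side read: validate, then upgrade older events to the latest version."""
    validate(event)
    if event["schema_version"] == 1:
        event = upgrade_v1_to_v2(event)
    return event


print(read({"schema_version": 1, "order_id": "A-1", "amount": 19.99}))
```

Rejecting an event with an unknown version or a missing field at read time keeps one misbehaving producer from silently corrupting downstream aggregates.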

Observability is another essential component. Teams must monitor throughput, latency, and processing failures across the entire pipeline. Because streaming systems operate continuously, small issues can quickly propagate through the system if they are not detected early.
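
The sketch below shows one way to track those three signals for a single pipeline stage. It is plain Python rather than a real metrics library such as a Prometheus client, and the class name and sample values are hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class PipelineMetrics:
    """Tracks throughput, end-to-end latency, and failures for one pipeline stage."""
    latencies_ms: list[float] = field(default_factory=list)
    processed: int = 0
    failed: int = 0

    def record(self, event_time_ms: float, done_time_ms: float, ok: bool) -> None:
        # End-to-end latency: from event creation to the end of processing.
        self.latencies_ms.append(done_time_ms - event_time_ms)
        if ok:
            self.processed += 1
        else:
            self.failed += 1

    def throughput_per_second(self, elapsed_seconds: float) -> float:
        return (self.processed + self.failed) / elapsed_seconds

    def p95_latency_ms(self) -> float:
        # Nearest-rank 95th percentile over all recorded samples.
        if not self.latencies_ms:
            return 0.0
        ordered = sorted(self.latencies_ms)
        rank = math.ceil(len(ordered) * 95 / 100)
        return ordered[rank - 1]

    def failure_rate(self) -> float:
        total = self.processed + self.failed
        return self.failed / total if total else 0.0


metrics = PipelineMetrics()
for i in range(10):
    # Hypothetical samples: one slow event (i == 9) and one failed event (i == 7).
    latency = 500 if i == 9 else 10
    metrics.record(i * 100, i * 100 + latency, ok=(i != 7))

print(metrics.p95_latency_ms(), metrics.failure_rate(), metrics.throughput_per_second(1.0))
```

With these samples the average latency is 59 ms, while the p95 value of 500 ms exposes the slow event. This is why streaming latency is often tracked as percentiles rather than averages.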

Fault tolerance and scalability are also critical. Systems must be able to handle spikes in event volume while preserving data integrity. Distributed streaming platforms and partitioning strategies help maintain stability under heavy load.
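
Key-based partitioning can be sketched as follows. Events with the same key always land on the same partition, which preserves per-key ordering, while many distinct keys spread the load. The hash here is `zlib.crc32`, chosen only for illustration; Kafka's default partitioner uses murmur2 for keyed records, so real partition numbers would differ.

```python
import zlib
from collections import Counter


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash.

    Python's built-in hash() is randomized per process for strings, so a
    stable hash such as crc32 is needed for consistent assignment.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions


events = [("user-1", "click"), ("user-2", "click"), ("user-1", "purchase")]
for key, action in events:
    # Both "user-1" events map to the same partition, so their order is preserved.
    print(key, action, "-> partition", partition_for(key, num_partitions=6))

# Distribution of 10,000 hypothetical user keys across 6 partitions.
load = Counter(partition_for(f"user-{i}", 6) for i in range(10_000))
print(sorted(load.items()))
```

A poorly chosen key, such as one that concentrates most traffic on a few values, produces "hot" partitions, so key selection matters as much as partition count.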

Finally, automation plays an important role in deployment and maintenance. Infrastructure-as-code and CI/CD pipelines help ensure that data infrastructure remains consistent and scalable as workloads grow.

Security and Data Governance

Real-time data systems process large volumes of information that may include sensitive business or customer data. As a result, strong governance and security practices are required.

Encryption should protect data both in transit and at rest. Access to event streams and analytics platforms must be controlled through role-based access policies. Data lineage tools can help track how data flows through the system, ensuring transparency and regulatory compliance.
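
A deny-by-default, role-based check on event streams might look like the sketch below. The roles, stream names, and `POLICY` structure are hypothetical; in practice these rules are usually configured in the streaming platform itself (for example, Kafka ACLs) or in a cloud provider's IAM service rather than in application code.

```python
from enum import Enum


class Action(Enum):
    READ = "read"
    WRITE = "write"


# Hypothetical policy: stream -> role -> permitted actions.
POLICY: dict[str, dict[str, set[Action]]] = {
    "payments": {"payments-service": {Action.WRITE}, "fraud-analyst": {Action.READ}},
    "clickstream": {"web-frontend": {Action.WRITE}, "analyst": {Action.READ}},
}


def is_allowed(role: str, action: Action, stream: str) -> bool:
    # Deny by default: unknown streams, roles, or actions are rejected.
    return action in POLICY.get(stream, {}).get(role, set())


print(is_allowed("fraud-analyst", Action.READ, "payments"))
print(is_allowed("analyst", Action.READ, "payments"))
```

Granting producers only write access and analysts only read access limits the damage a compromised or misconfigured service can do.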

Organizations also implement data validation and quality monitoring to prevent incorrect or incomplete data from entering analytics pipelines. Maintaining trust in real-time analytics depends on ensuring that incoming data remains accurate and consistent.
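
One common way to do this is to check each event against quality rules and route failures to a separate dead-letter queue instead of dropping them silently. The sketch below assumes hypothetical IoT temperature readings; the field names, the plausible range, and the `RoutedEvents` container are illustrative, and the lists stand in for real output streams.

```python
from dataclasses import dataclass, field
from typing import Any, Optional

REQUIRED_FIELDS = ("sensor_id", "timestamp", "temperature_c")
MIN_TEMP_C, MAX_TEMP_C = -50, 150  # hypothetical plausible range for this sensor type


@dataclass
class RoutedEvents:
    valid: list[dict[str, Any]] = field(default_factory=list)
    dead_letter: list[tuple[dict[str, Any], str]] = field(default_factory=list)


def quality_issue(event: dict[str, Any]) -> Optional[str]:
    """Return a reason if the event breaks a quality rule, otherwise None."""
    missing = [name for name in REQUIRED_FIELDS if event.get(name) is None]
    if missing:
        return "missing fields: " + ", ".join(missing)
    temp = event["temperature_c"]
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(temp, bool) or not isinstance(temp, (int, float)):
        return "temperature_c is not numeric"
    if not MIN_TEMP_C <= temp <= MAX_TEMP_C:
        return f"temperature_c out of range: {temp}"
    return None


def route(events: list[dict[str, Any]]) -> RoutedEvents:
    routed = RoutedEvents()
    for event in events:
        issue = quality_issue(event)
        if issue is None:
            routed.valid.append(event)
        else:
            # Keep the bad event and the reason so it can be inspected or replayed.
            routed.dead_letter.append((event, issue))
    return routed


readings = [
    {"sensor_id": "s1", "timestamp": 1700000000, "temperature_c": 21.5},
    {"sensor_id": "s2", "timestamp": 1700000001},
    {"sensor_id": "s3", "timestamp": 1700000002, "temperature_c": 999},
]
routed = route(readings)
print(len(routed.valid), [reason for _, reason in routed.dead_letter])
```

Monitoring the size of the dead-letter queue over time also gives a simple data-quality signal: a sudden jump usually points to a broken producer or an unannounced schema change.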

Business Impact of Real-Time Analytics

The adoption of real-time analytics fundamentally changes how companies operate. Instead of relying on periodic reports, organizations gain the ability to react instantly to changing conditions.

Operational teams can detect issues before they escalate, marketing teams can adjust campaigns dynamically, and product teams can analyze user behavior as it happens. This continuous flow of insights supports faster experimentation and more informed decision-making.

Real-time analytics also enables new product capabilities. Personalized recommendations, automated alerts, dynamic pricing, and predictive maintenance all rely on immediate access to fresh data.

Companies that invest in real-time analytics platforms build systems that adapt continuously to fresh data, which can give them a strong competitive advantage in data-driven markets.

Conclusion

Real-time analytics has become a key component of modern digital infrastructure. As businesses demand faster insights and more responsive systems, streaming architectures and event-driven data platforms will continue to play a central role in data engineering.

Organizations that build scalable real-time analytics systems today position themselves to respond faster, innovate more effectively, and unlock the full potential of their data.

Why Ficus Technologies?

At Ficus Technologies, we help organizations design and implement modern data platforms that support real-time analytics at scale. Our engineers combine expertise in streaming infrastructure, data engineering, and cloud-native architecture to build reliable data pipelines that transform event streams into actionable insights.

From selecting the right streaming technologies to implementing monitoring, governance, and deployment pipelines, we work closely with clients to ensure their data platforms are resilient, scalable, and ready for production workloads.

With the right architecture and operational practices, real-time analytics becomes more than a technical capability — it becomes a strategic asset that powers smarter products and faster decisions.

Batch analytics processes data at scheduled intervals, often hours or days after events occur, whereas real-time analytics processes each event continuously as it arrives.

Typical real-time analytics stacks include streaming platforms like Apache Kafka, stream processing engines such as Apache Flink or Spark Structured Streaming, and analytical databases like ClickHouse, BigQuery, or Snowflake.

Not every use case requires real-time processing. Systems that rely on operational monitoring, fraud detection, IoT telemetry, or user behavior analysis benefit the most from real-time analytics. In other cases, batch analytics may still be sufficient.

Common challenges include managing high data volumes, maintaining schema consistency, ensuring fault tolerance, and monitoring pipeline latency.