Sustainability in tech has moved from aspiration to operating discipline. Regulatory pressure (e.g., new ESG disclosures), cost volatility, and the rapid rise of AI workloads have forced engineering and platform teams to treat carbon and energy as first-class constraints—right alongside latency, availability, and security. Sustainable computing is not “go slower to be greener.” It’s the practice of designing, building, and operating systems that deliver the same—or better—product outcomes while lowering emissions, water use, and total cost of ownership (TCO). This article offers a practical, modern playbook: what to measure, where impact hides, and how to deliver real reductions in 90 days without compromising SLOs. “Green IT” isn’t about slogans; it’s about engineering discipline.

Platform/Cloud leaders responsible for infra efficiency and SLOs.

AI/ML leads looking to curb training/inference cost & footprint.

FinOps/GreenOps & Sustainability officers aligning cost, carbon, and compliance.

Product owners operating data-intensive, cloud-native systems.

- Sustainable computing = same (or better) SLOs at lower carbon and TCO.

- Measure where value happens: kgCO₂e/1k requests, kWh/1k inferences, kgCO₂e/GB-month; tag resources so FinOps + GreenOps roll up together.

- Architect for efficiency: data minimization, Parquet+compression, edge/CDN, evented designs, scale-to-zero, and carbon-aware batch scheduling.

What “Sustainable Computing” Actually Means

Sustainable computing blends architecture, operations, and procurement to reduce:

- Operational emissions (mainly electricity for compute, storage, networking, and cooling).

- Embedded carbon (manufacture, transport, and end-of-life of devices and servers).

- Water and heat externalities (data center cooling).

- E-waste across client and edge fleets.

The key is to optimize for outcomes: you still hit product SLOs and developer velocity targets, but you do it with better data handling, smarter placement of workloads, energy-aware scheduling, and leaner software.

Where the Footprint Comes From (and Why It Grows)

Most emissions trace back to a few sources. First, cloud and data center energy: the power consumed by compute, memory, storage, networks, and the cooling that supports them. Second, the network path itself: heavyweight media, chatty APIs, global round-trips. Third, software inefficiency: unnecessary data, N+1 queries, uncompressed formats, unprofiled code. Fourth, AI: training and inference both scale linearly with usage and can spike suddenly during launches and growth cycles. Finally, devices: the embodied carbon in laptops, phones, edge gateways, and servers is significant and often ignored when refresh cycles are set by policy rather than by data.

Operations That Make Savings Stick (FinOps + “Ops Efficiency”)

Right-size instances, set realistic autoscaling targets, and turn off non-prod at night and on weekends. Run a daily idle cleanup: detach and delete unused volumes, IPs, buckets, topics, and dead environments. Ship behind feature flags to reduce rollbacks and duplicate traffic. Keep test data small but realistic to cut CI runtime and egress. And most importantly: put kWh and cost per unit on the same team dashboards as latency and error rate.

Architecture Patterns That Reduce Waste

Start with data. Minimize what you store and process; default to retention policies, archival storage classes, and columnar formats (Parquet/ORC) with compression (e.g., Zstandard). Push frequently reused content to the edge and cache aggressively so you don’t recompute or retransfer the same bytes.

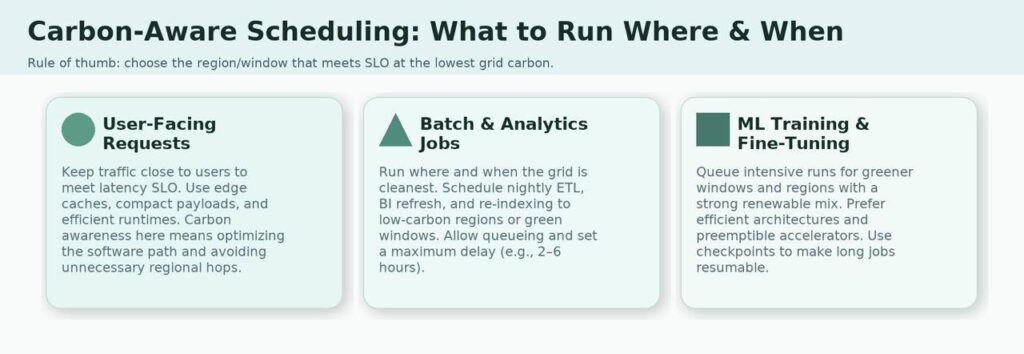

Place compute thoughtfully. Use carbon-aware schedulers for batch and analytics so jobs run in greener regions or windows without hurting user-facing latency. Prefer event-driven designs (pub/sub) to break tight coupling and idle wait states. Aim for energy proportionality—services that scale to zero when idle (serverless, jobs, on-demand workers) beat always-on clusters for spiky workloads.

Reduce network overhead. Localize traffic with regional clusters and edge functions; collapse chatty patterns into batched or streaming calls; eliminate unnecessary round-trips with well-designed GraphQL or consolidated endpoints.

Choose efficient runtimes and make profiling non-negotiable. Go, Rust, and modern JVMs can all be efficient when tuned; what matters is evidence. Wire CPU/memory/alloc regressions into CI so performance—and therefore energy—doesn’t drift.

GreenOps: Operating the Platform Like It’s Your Energy Bill

Operations is where savings stick. Right-size instances; set autoscaling targets that actually utilize resources; and turn on scale-to-zero for non-production and off-hours environments. Hunt “zombie” assets with scheduled jobs that quarantine or delete unattached disks, stale IPs, abandoned buckets, orphaned topics, and forgotten previews. Release behind feature flags to reduce rollbacks and duplicated traffic. Keep test data lean and realistic to avoid inflating storage, CI runtime, and egress. Finally, put a carbon panel on the same dashboard as latency, error rate, and spend—visibility drives behavior.

Efficient AI: The Biggest Lever, Handled Responsibly

Treat AI as a lifecycle, not a single knob.

Before training, deduplicate and curate datasets; use early stopping and Bayesian search to avoid brute-force sweeps; and pick architectures sized to the task rather than defaulting to “bigger is better.”

Instead of retraining, prefer retrieval-augmented generation (RAG) or knowledge search to inject new facts without burning GPU months. For models you own, apply distillation, pruning, and quantization (8-/4-bit, mixed precision). These techniques cut inference energy and cost while preserving accuracy for most production use cases.

At inference, batch where possible, enable speculative decoding and KV-cache reuse, set token and time limits, and choose accelerators in regions with lower carbon intensity. Track a practical KPI—kWh per 1,000 requests—next to P95 latency so teams can trade speed and efficiency consciously.

Device and Server Lifecycle

Sustainability is also procurement and fleet management. Buy against recognized standards (e.g., EPEAT/TCO), and ask vendors for life-cycle assessments. Refresh hardware based on telemetry (energy profile, failure risk, repair economics), not a fixed calendar. Give devices a second life via certified resale/donation with secure erasure. For edge fleets, centralize inventory, enforce sleep schedules, and orchestrate updates in sensible batches.

Sustainability and performance are not mutually exclusive. In fact, with the right approach, they can be deeply complementary.

Chris Carriero

Risks and Honest Trade-Offs

Three traps recur. First, latency vs. greener regions: not all workloads can move; separate user-critical paths from deferrable work and optimize each. Second, greenwashing: publish your methods (boundaries, factors, attribution rules) alongside your numbers. Third, the rebound effect: efficiency can spur usage that erases gains. Set guardrails—budget, carbon targets, or rate limits—so improvements persist.

What “Good” Looks Like by Year-End –

Carbon and cost sit next to latency and error rate on shared dashboards. Teams know their kgCO₂e per 1,000 requests and kWh per 1,000 inferences the way they know P95. Most batch work runs in greener windows; previews and non-prod idle to zero; caches cut redundant compute; and AI inference is optimized by design. Procurement extends device life when it’s cheaper and cleaner than replacement. The cumulative effect: lower bills, lower risk, and fewer surprise fires—even as you ship faster.

Conclusion

Sustainable computing is disciplined engineering. When carbon and energy become first-class signals, systems get simpler: less wasted data, fewer unnecessary trips, right-sized compute, and AI that’s sized and optimized for real use. Start with measurement, fix the loudest queues, make AI efficient, and add carbon awareness to your schedulers. You’ll ship a faster, cheaper, and cleaner product—and you’ll have the metrics to prove it.

Prefer RAG over full retraining; apply distillation/quantization; batch requests, reuse KV-cache, cap tokens, and run in lower-carbon regions when latency allows.

Separate paths: keep user-facing work near users; send batch/analytics to greener regions or green time windows. Edge caches and compact payloads minimize hops.

They help, but reductions beat offsets. Publish your methodology (boundaries, factors) to avoid greenwashing and focus on direct efficiency gains first.